KDE PIM/Akonadi Next

Cmollekopf (talk | contribs) |

Revision as of 13:36, 8 December 2014

This is WIP!

This is an experimental attempt at building a successor to Akonadi 1. The source code repository is currently on git.kde.org under scratch/aseigo/akonadinext

Current Discussion Points

- storage read API

- data scheme

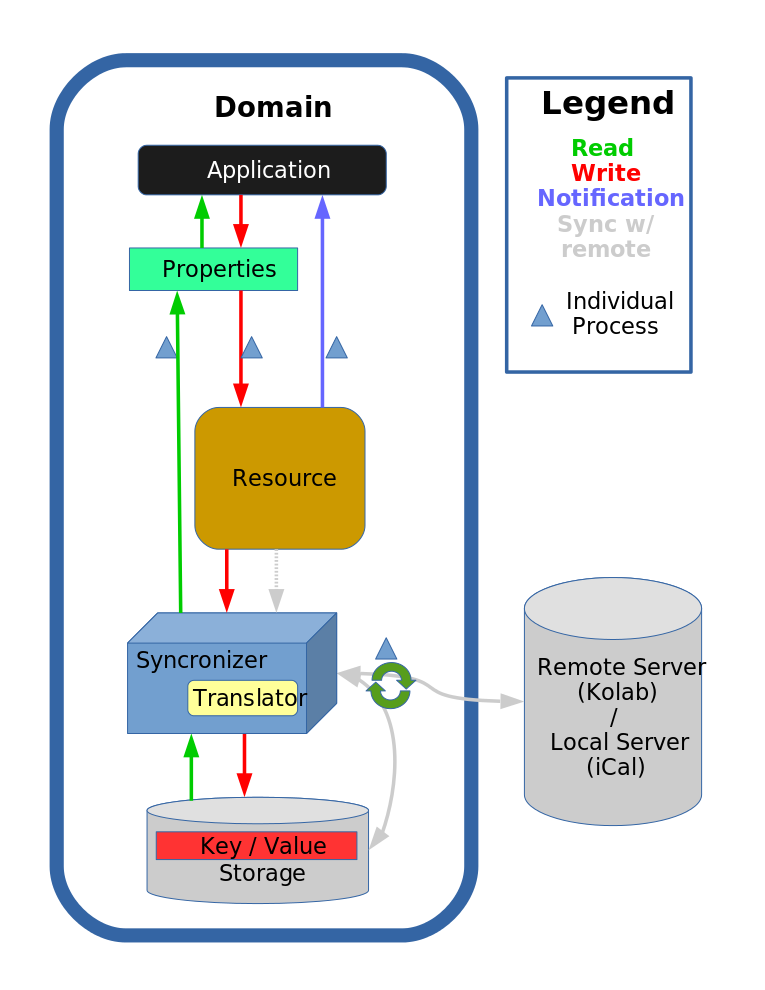

Terminology

- Source: the canonical data set, which may be a remote IMAP server, a local iCal file, a local maildir, etc.

- Store: the local mirror for a given data set

- Synchronizer: the process responsible for modifying and synchronizing the store

- Resource: a set of configuration describing which synchronizer to use with what settings (e.g. server settings, local paths, etc.), plus a plugin for the client API to access the store.

- Entity: the atomic unit of a store (an email, a calendar item, a contact, a note, a tag...)

- Filter: a component that takes a message and performs some modification of it (e.g. changes the folder an email is in) or processes it in some way (e.g. indexes it)

- Pipeline: a run-time definable set of filters which are applied to a message after a resource has performed a specific kind of function on it (add, update, remove...)

- Query: a well-defined structure for requesting messages from one or more sources that match a given set of constraints
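
To make the Filter and Pipeline terms more concrete, here is a minimal sketch in Python. All names (`Pipeline`, `Indexer`, `move_to_folder`) and the representation of a message as a plain dict are assumptions made for illustration; they are not part of the Akonadi Next code.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical message type: a plain dict of properties.
Message = Dict[str, object]
Filter = Callable[[Message], Message]


def move_to_folder(folder: str) -> Filter:
    """Build a filter that modifies a message by changing its folder."""
    def apply(message: Message) -> Message:
        return {**message, "folder": folder}
    return apply


@dataclass
class Indexer:
    """A filter that processes a message (indexes its subject) without modifying it."""
    index: Dict[str, List[str]] = field(default_factory=dict)

    def __call__(self, message: Message) -> Message:
        for word in str(message.get("subject", "")).lower().split():
            self.index.setdefault(word, []).append(str(message["id"]))
        return message


@dataclass
class Pipeline:
    """Filters registered at run time, keyed by the operation that triggers them."""
    filters: Dict[str, List[Filter]] = field(default_factory=dict)

    def register(self, operation: str, flt: Filter) -> None:
        self.filters.setdefault(operation, []).append(flt)

    def run(self, operation: str, message: Message) -> Message:
        # Apply the filters for this operation in registration order.
        for flt in self.filters.get(operation, []):
            message = flt(message)
        return message


indexer = Indexer()
pipeline = Pipeline()
pipeline.register("add", move_to_folder("inbox/lists"))
pipeline.register("add", indexer)

result = pipeline.run("add", {"id": "42", "subject": "Akonadi Next", "folder": "inbox"})
```

After the run, `result["folder"]` is `"inbox/lists"` and the indexer has recorded the words of the subject. Operations without registered filters pass the message through unchanged.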

Design

Tradeoffs/Design Decisions

- Key-Value store instead of relational

- + Schemaless, easier to evolve

- + No need to fully normalize the data in order to make it queryable. Without full normalization, SQL offers little benefit and performs poorly.

- - We need to maintain our own indexes

- Individual store per resource

- Storage format defined by resource individually

- - Each resource needs to define its own schema

- + A resource can adjust its storage format to map well onto what it has to synchronize

- + Synchronization state can directly be embedded into messages

- + Individual resources could switch to another store technology

- + Easier maintenance

- + A resource is only responsible for its own store and cannot accidentally break another resource's store

- - Inter-resource moves are both more complicated and more expensive from a client perspective

- + Inter-resource moves become simple additions and removals from a resource perspective

- - No system-wide unique id per message (only resource/id tuple identifies a message uniquely)

- + Stores can work fully concurrently (also for writing)

- Indexes defined and maintained by resources

- - Relational queries across resources are expensive (depending on the query, perhaps not even feasible)

- - Each resource needs to define its own set of indexes

- + Flexible design, as indexes can be changed on a per-resource level

- + Indexes can be optimized for the resource's main use cases

- + Indexes can be shared with the source (IMAP serverside threading)

- Shared domain types as common interface for client applications

- - yet another abstraction layer that requires translation to other layers and maintenance

- + decoupling of domain logic from data access

- + allows types to evolve according to needs (not coupled to a specific application's domain types)
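
The store-per-resource tradeoffs above can be sketched as follows. This is an illustrative Python sketch under stated assumptions, not the actual implementation: `ResourceStore`, `move`, the folder index, and the in-memory dicts standing in for a key-value store are all hypothetical.

```python
from typing import Dict, List, Tuple


class ResourceStore:
    """Hypothetical per-resource key-value store that maintains its own index."""

    def __init__(self, resource_id: str) -> None:
        self.resource_id = resource_id
        self._entities: Dict[str, dict] = {}        # primary key-value data
        self._by_folder: Dict[str, List[str]] = {}  # index the resource maintains itself

    def put(self, entity_id: str, entity: dict) -> Tuple[str, str]:
        old = self._entities.get(entity_id)
        if old is not None:
            # Keep the index consistent when an entity is overwritten.
            self._by_folder[old["folder"]].remove(entity_id)
        self._entities[entity_id] = entity
        self._by_folder.setdefault(entity["folder"], []).append(entity_id)
        # Only the (resource, id) tuple identifies an entity system-wide.
        return (self.resource_id, entity_id)

    def remove(self, entity_id: str) -> dict:
        entity = self._entities.pop(entity_id)
        self._by_folder[entity["folder"]].remove(entity_id)
        return entity

    def in_folder(self, folder: str) -> List[str]:
        return list(self._by_folder.get(folder, []))


def move(source: ResourceStore, target: ResourceStore,
         entity_id: str, new_id: str) -> Tuple[str, str]:
    """From the resources' perspective a move is one removal plus one addition."""
    entity = source.remove(entity_id)
    return target.put(new_id, entity)


imap = ResourceStore("imap-work")
local = ResourceStore("maildir-local")
imap.put("1", {"folder": "inbox", "subject": "hello"})
key = move(imap, local, "1", "a")
```

Note that `move` here is not atomic: a failure between the removal and the addition would lose the entity, which is part of why inter-resource moves are listed as more complicated from the client perspective.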

Risks

- key-value store does not perform with large amounts of data

- query performance is not sufficient

- turnaround time for modifications is too high to feel responsive

Comments/Thoughts/Questions

Comments/Thoughts:

- Incremental changes can be recorded by message buffer => resource can replay incremental changes

- Each modification message is associated with a specific revision

- Synchronizer can do conflict resolution while writing to the store

- The revision that this change applies to is still available => 3-way merge is possible

- Copying of domain objects may defeat mmapped buffers if individual properties get copied (all data is read from disk). We have to make sure only the pointer gets copied.

- Tags/Relations now need a target resource on creation. This is a change from the current situation where you can just create a tag, and every resource can synchronize it. It requires a bit more work but results also in a more predictable system, which we'll also need if we want to support different tag storage locations (shared tag set in a shared folder).
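
The revision-based conflict resolution mentioned above could look roughly like the following Python sketch. It assumes entities are flat dicts of properties, that every past revision is still readable, and that the incoming change wins a true conflict. The conflict policy and the names `three_way_merge` and `RevisionedEntity` are assumptions for illustration only.

```python
from typing import Dict, List

Entity = Dict[str, object]


def three_way_merge(base: Entity, current: Entity, incoming: Entity) -> Entity:
    """Merge property by property against the revision the change was based on.

    A property set to None is treated as absent in this sketch.
    """
    merged: Entity = {}
    for key in set(base) | set(current) | set(incoming):
        b, c, i = base.get(key), current.get(key), incoming.get(key)
        if c == i:
            value = c      # both sides agree
        elif c == b:
            value = i      # only the incoming change touched this property
        elif i == b:
            value = c      # only the store changed since the base revision
        else:
            value = i      # true conflict: incoming wins (arbitrary policy)
        if value is not None:
            merged[key] = value
    return merged


class RevisionedEntity:
    """Keeps every revision so a change can be merged against its base."""

    def __init__(self, initial: Entity) -> None:
        self.revisions: List[Entity] = [initial]  # index in the list = revision number

    def apply(self, based_on: int, changed: Entity) -> int:
        base = self.revisions[based_on]
        current = self.revisions[-1]
        self.revisions.append(three_way_merge(base, current, changed))
        return len(self.revisions) - 1


mail = RevisionedEntity({"subject": "Hi", "flags": "unread"})
rev1 = mail.apply(0, {"subject": "Hi", "flags": "read"})
# A second change that was still based on revision 0:
rev2 = mail.apply(0, {"subject": "Hello", "flags": "unread"})
```

Because the second modification names revision 0 as its base, the merge can tell that it only changed the subject, so the flag change from revision 1 survives.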

Open Questions:

- How is lazy loading triggered? Through a synchronize command to the synchronizer?

- Yes, a sync command. So sync commands need to be able to specify a context (which a resource may ignore, of course)

- Resource-to-resource moves (e.g. expiring mail from an imap folder to a local folder)

- Should the "source" resource become a client to the other resource, and drive the process? This would allow greatest atomicity. It does imply being able to issue cross-resource move commands.

- If we use the mmapped buffers to avoid having a property level query API, lazy loading of properties becomes difficult. Currently we specify how much of each item we need using the parts, allowing the lazy loading to fetch missing parts. If we no longer specify what we want we cannot lazy load that part and at the point where the buffer is accessed it's already too late.

- How do we record changes?

- The message adaptor could do that.

- How do we deal with unknown properties on the application side if the storage format doesn't support it yet?

- N adapters have to be updated for a new property in the domain object

- => We could have a base class that stores any unhandled property in a generic key-value part of the message that is implemented by every store.

- How well can we free up memory with mmapped buffers?

- 1000 objects are loaded with minimal access (subject)

- A bunch of attachments are opened => we stream each file to tmp disk and open it.

- Can we unload the no-longer required attachments?

- => I suppose we'd have to munmap the buffer and mmap it again, replacing the pointer.

- This may mean we require a shared wrapper for the pointer so we can replace the pointer everywhere it's used.

- Filtering: If client-side filtering is implemented as a filter, can we still move IMAP messages to a local folder? We would have to move the message to another store and remove it from the source.

- Datastreaming: It seems that with the current message model it is not possible for the resource to stream large payloads directly to disk.

- As a possible solution a resource could decide to store large payloads externally, stream directly to disk, and then pass a message containing the file location to the system.

- How do we deal with mass insertions? => n inserted entities should not result in n update notifications with n updates.

- I suppose simple event compression should largely solve this issue.
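
As an illustration of the event compression suggested for mass insertions, the following Python sketch collapses a burst of change events into at most one event per entity, so that a single batched notification can be sent. The event shape `(operation, entity_id)` and the merging rules are assumptions, not an existing API.

```python
from typing import Dict, List, Tuple

Event = Tuple[str, str]  # (operation, entity id); operation is add/update/remove


def compress(events: List[Event]) -> List[Event]:
    """Collapse a burst of events into at most one event per entity."""
    pending: Dict[str, str] = {}  # dicts preserve insertion order
    for operation, entity_id in events:
        previous = pending.get(entity_id)
        if previous is None:
            pending[entity_id] = operation
        elif operation == "remove":
            if previous == "add":
                # Added and removed within the same burst: nothing to report.
                del pending[entity_id]
            else:
                pending[entity_id] = "remove"
        elif previous == "add":
            # An add followed by updates is still a single add for clients.
            pending[entity_id] = "add"
        elif previous == "remove" and operation == "add":
            # Removed and re-added: clients only need to refresh the entity.
            pending[entity_id] = "update"
        else:
            pending[entity_id] = operation
    return [(operation, entity_id) for entity_id, operation in pending.items()]


burst = [
    ("add", "1"), ("update", "1"),
    ("add", "2"), ("remove", "2"),
    ("update", "3"), ("update", "3"),
]
notification = compress(burst)
```

The six events in `burst` end up as one notification carrying two entries, an add for entity 1 and an update for entity 3.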

Potential Features:

- Undo framework: Application sessions keep data around for long enough that we can rollback to earlier states of the store.

- Note that this would need to work across stores.

- iTip handling in the form of a filter? E.g. a Kolab resource could install a filter that automatically dispatches iTip invitations based on the events stored.

Thoughts from Meeting (till, dvratil, ...) that need to be processed:

- Notification hints (what changed), to avoid potentially expensive updates for minor changes. (Only hints though, not an authoritative changeset; can be optimized for use cases.)

- Resorting in kmail is a common operation

- New S/MIME implementation that doesn't support all of PGP would probably suffice

- DVD by email case should be considered

- Hierarchical configuration. Fallback to systemconfig.

- Re-uploading messages that we failed to upload should be possible

- IMAP IDLE => we cannot shut down the resources

- Migration is important (especially pop3 archives, as they cannot redownload)

- Optional Akonadi would be nice (If you have a 100MB quota you may not have 50MB for akonadi).

- For pipelines: e.g. for mail filters we must guarantee that a message is never processed by the same filter twice.

- Do we need e.g. a shared Node/Collection base type for hierarchies, so we can e.g. query the Kolab folder tree containing calendars/notebooks/mail folders?

Synchronizer

- The synchronization can either:

- Generate a full diff directly on top of the db. The diffing process can work against a single revision, and could even stop writing other changes to disk while the process is ongoing (but doesn't have to due to the revision). It then generates a necessary changeset for the store.

- If the source supports incremental changes the changeset can directly be generated from that information.

The changeset is then simply inserted into the regular modification queue and processed like all other modifications. The synchronizer already knows that it doesn't have to replay this changeset to the source, since replay no longer goes via the store.
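
The full-diff variant can be sketched in Python as below. The sketch assumes both the store snapshot (a single revision) and the source listing fit into dicts keyed by entity id, and that a changeset is a list of `(operation, id, payload)` tuples. These shapes and the name `full_diff` are hypothetical.

```python
from collections import deque
from typing import Deque, Dict, List, Optional, Tuple

Change = Tuple[str, str, Optional[dict]]  # (operation, entity id, payload)


def full_diff(snapshot: Dict[str, dict], source: Dict[str, dict]) -> List[Change]:
    """Compare one store revision with the source and return the needed changeset."""
    changes: List[Change] = []
    for entity_id, remote in source.items():
        if entity_id not in snapshot:
            changes.append(("add", entity_id, remote))
        elif snapshot[entity_id] != remote:
            changes.append(("update", entity_id, remote))
    for entity_id in snapshot:
        if entity_id not in source:
            changes.append(("remove", entity_id, None))
    return changes


snapshot = {"0": {"subject": "old"}, "1": {"subject": "a"}, "2": {"subject": "b"}}
source = {"1": {"subject": "a"}, "2": {"subject": "B"}, "3": {"subject": "c"}}

changeset = full_diff(snapshot, source)

# The changeset goes through the same queue as every other modification.
queue: Deque[Change] = deque(changeset)
store = dict(snapshot)
while queue:
    operation, entity_id, payload = queue.popleft()
    if operation == "remove":
        del store[entity_id]
    else:
        store[entity_id] = payload
```

Once the queue is drained, `store` matches `source`. When the source supports incremental changes, `full_diff` would be skipped and the changeset built directly from what the source reports.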

Useful Resources

- Socket activated processes (for the resource shell): http://0pointer.de/blog/projects/socket-activation.html

Diagrams

Akonadi Next Workflow Draft